Multimodal Content Publishing

With the latest multimodal models, such as OpenAI GPT-4 Vision, the Graphlit Platform can be used to repurpose not just textual content, but images and video as well.

One potential use case is inspection reports of apartments. Often, an apartment manager will take move-in and move-out photos of an apartment for their records.

We can use the power of the GPT-4 Vision model to analyze these images, and use Graphlit's content publishing capabilities to automatically generate a written inspection report for the apartment manager.

Together, Graphlit and GPT-4 Vision support automated workflows for multimodal unstructured data, which can easily be incorporated into any vertical AI application. Graphlit provides these capabilities with just a few API calls, where building them in-house would take developers weeks, if not months.

Create Specification

First, we need to create an LLM specification for the publishing process. We are using the GPT-4 Turbo 128k model to get the best output quality for the inspection report. We are also setting the temperature to 0.5, which balances creative phrasing against consistent output. You can adjust these parameters to suit your specific use case.

Mutation:

    mutation CreateSpecification($specification: SpecificationInput!) {
      createSpecification(specification: $specification) {
        id
        name
        state
        type
        serviceType
      }
    }

Variables:

    {
      "specification": {
        "type": "COMPLETION",
        "serviceType": "OPEN_AI",
        "openAI": {
          "model": "GPT4_TURBO_128K_1106",
          "temperature": 0.5,
          "probability": 0.2
        },
        "name": "Apartment Inspection"
      }
    }

Response:

    {
      "type": "COMPLETION",
      "serviceType": "OPEN_AI",
      "id": "1750c1d4-cd16-41cd-ab2d-8dddf25472e1",
      "name": "Apartment Inspection",
      "state": "ENABLED"
    }
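As a rough sketch of how this mutation could be sent from code, the snippet below assembles a standard GraphQL-over-HTTP request body (a `query` string plus a `variables` object) using only the Python standard library. The helper name `build_specification_request` is hypothetical and not part of any Graphlit SDK; the mutation text and field values are copied from the example above.

```python
import json

# GraphQL document copied from the tutorial's CreateSpecification mutation.
CREATE_SPECIFICATION = """
mutation CreateSpecification($specification: SpecificationInput!) {
  createSpecification(specification: $specification) {
    id
    name
    state
    type
    serviceType
  }
}
"""


def build_specification_request(name: str, temperature: float = 0.5,
                                probability: float = 0.2) -> str:
    """Return a JSON request body for CreateSpecification (hypothetical helper)."""
    variables = {
        "specification": {
            "type": "COMPLETION",
            "serviceType": "OPEN_AI",
            "openAI": {
                "model": "GPT4_TURBO_128K_1106",
                "temperature": temperature,
                "probability": probability,
            },
            "name": name,
        }
    }
    # GraphQL-over-HTTP convention: the document plus its variables.
    return json.dumps({"query": CREATE_SPECIFICATION, "variables": variables})


body = build_specification_request("Apartment Inspection")
print(json.loads(body)["variables"]["specification"]["openAI"])
```

Keeping temperature and probability as parameters makes it easy to experiment with different values without editing the variables by hand.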

Create Workflow

Next, in order to analyze images with the GPT-4 Vision model, we need to opt in to image analysis by setting enableImageAnalysis to true.

We are selecting the HIGH detail level for the GPT-4 Vision model, which gives the most detailed description of images. It does incur higher credit usage, but the quality difference is noticeable.

Mutation:

    mutation CreateWorkflow($workflow: WorkflowInput!) {
      createWorkflow(workflow: $workflow) {
        id
        name
        state
      }
    }

Variables:

    {
      "workflow": {
        "preparation": {
          "jobs": [
            {
              "connector": {
                "type": "IMAGE",
                "image": {
                  "enableImageAnalysis": true
                }
              }
            }
          ]
        },
        "extraction": {
          "jobs": [
            {
              "connector": {
                "type": "OPEN_AI_IMAGE",
                "openAIImage": {
                  "detailLevel": "HIGH"
                },
                "extractedTypes": [
                  "LABEL"
                ]
              }
            }
          ]
        },
        "name": "Apartment Inspection"
      }
    }

Response:

    {
      "id": "2c7da5e7-cc7b-4541-a5b8-bded7e0871ac",
      "name": "Apartment Inspection",
      "state": "ENABLED"
    }
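The nested workflow variables are easy to get wrong by hand, so here is a minimal sketch that builds them from a small Python helper. The function name `build_workflow_input` is hypothetical; the structure mirrors the variables above. Only the HIGH detail level appears in this tutorial, so any other value passed as `detail_level` is an assumption to check against the Graphlit documentation.

```python
import json


def build_workflow_input(name: str, detail_level: str = "HIGH") -> dict:
    """Build the CreateWorkflow variables shown above (hypothetical helper)."""
    return {
        "workflow": {
            "preparation": {
                "jobs": [
                    {
                        "connector": {
                            "type": "IMAGE",
                            # Opt in to image analysis during preparation.
                            "image": {"enableImageAnalysis": True},
                        }
                    }
                ]
            },
            "extraction": {
                "jobs": [
                    {
                        "connector": {
                            "type": "OPEN_AI_IMAGE",
                            # Only "HIGH" is shown in the tutorial.
                            "openAIImage": {"detailLevel": detail_level},
                            "extractedTypes": ["LABEL"],
                        }
                    }
                ]
            },
            "name": name,
        }
    }


variables = build_workflow_input("Apartment Inspection")
print(json.dumps(variables, indent=2))
```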

Note that specifications and workflows can be reused across other use cases, and don't need to be created each time you publish content.

Apartment Inspection Images

We've created a feed to ingest a set of images taken by the apartment manager. We won't go into the details here, but you can read more about creating a feed in the Graphlit documentation.

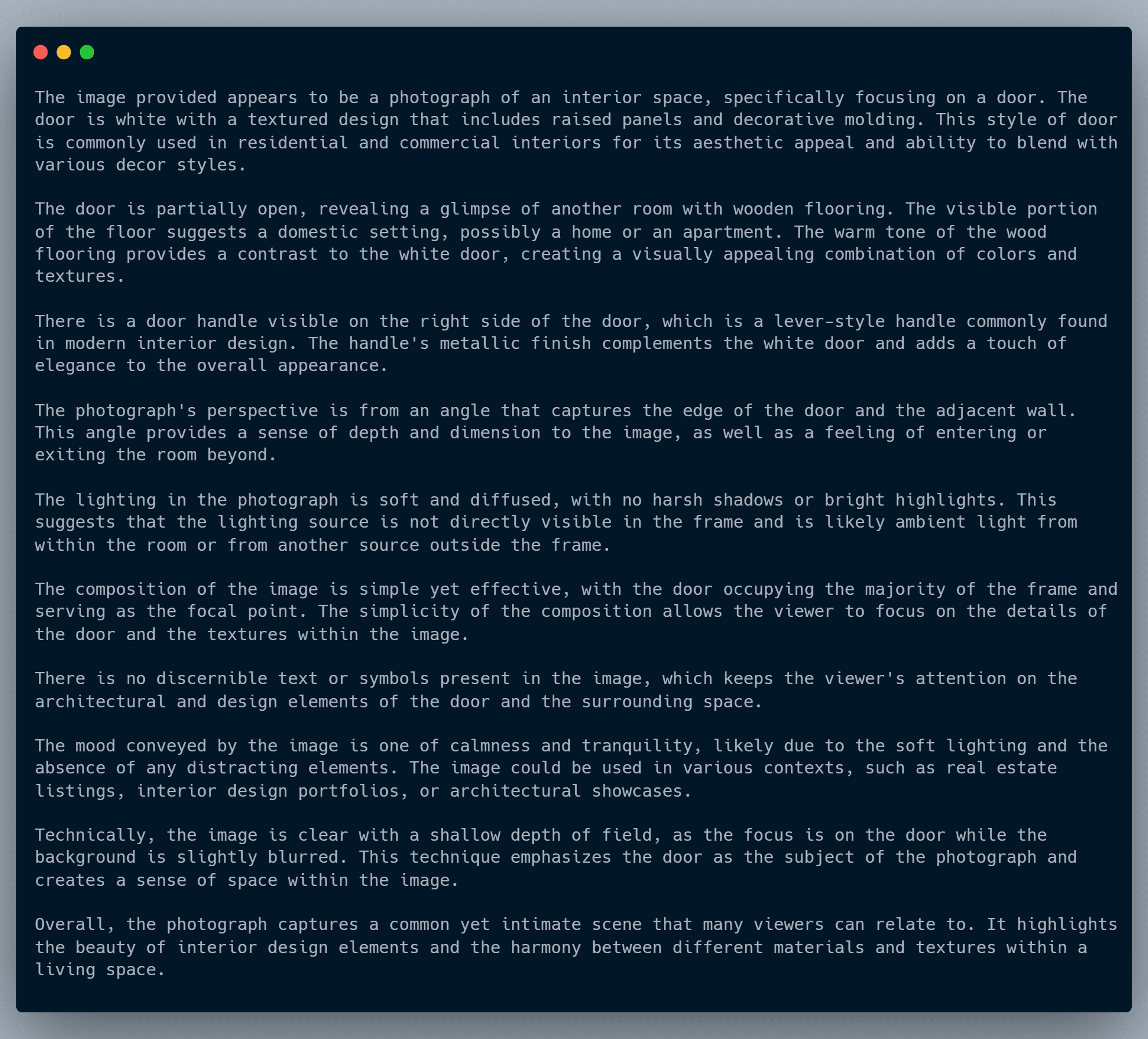

Here is an example description of one of the images, generated by GPT-4 Vision with our default Graphlit image analysis prompt.

Publishing From Image Descriptions

Now that we have the images ingested into Graphlit, with their descriptions generated by the GPT-4 Vision model, we can leverage the publishContents mutation to summarize and publish our inspection report as a new content item.

Here we are telling the model to generate Markdown content, but you can generate text, Markdown, or HTML with this publishing process. You will also need to pass the specification that we created above as the publishSpecification.

We used this prompt to generate the inspection report for our apartment manager, but any publishing prompt can be used for your needs.

I'm an apartment manager, and need to write an inspection report about this apartment.

Respond in separate sections, each highlighting the main bullet points and summarized in a paragraph or two.

Follow these steps.

Step 1: Summarize what you can tell about each room.

Step 2: Write an overall summary of the apartment. Identify key features of each room, such as the kitchen and bathroom. Be detailed and call out things like the floor type and appliances.

Step 3: Highlight any damage which may need repair.
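The prompt above is written as separate paragraphs, while the publishPrompt variable is a single string. One way to keep the readable form in source code and still send one line is sketched below; the `flatten_prompt` helper is hypothetical, and joining with single spaces is just one reasonable choice.

```python
# The prompt paragraphs, copied from the tutorial text above.
PROMPT_PARAGRAPHS = [
    "I'm an apartment manager, and need to write an inspection report "
    "about this apartment.",
    "Respond in separate sections, each highlighting the main bullet points "
    "and summarized in a paragraph or two.",
    "Follow these steps.",
    "Step 1: Summarize what you can tell about each room.",
    "Step 2: Write an overall summary of the apartment. Identify key features "
    "of each room, such as the kitchen and bathroom. Be detailed and call out "
    "things like the floor type and appliances.",
    "Step 3: Highlight any damage which may need repair.",
]


def flatten_prompt(paragraphs: list) -> str:
    """Join non-empty paragraphs into one space-separated string."""
    return " ".join(p.strip() for p in paragraphs if p.strip())


publish_prompt = flatten_prompt(PROMPT_PARAGRAPHS)
print(publish_prompt)
```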

Mutation:

    mutation PublishContents(
      $summaryPrompt: String,
      $publishPrompt: String!,
      $connector: ContentPublishingConnectorInput!,
      $filter: ContentFilter,
      $name: String,
      $summarySpecification: EntityReferenceInput,
      $publishSpecification: EntityReferenceInput,
      $workflow: EntityReferenceInput
    ) {
      publishContents(
        summaryPrompt: $summaryPrompt,
        publishPrompt: $publishPrompt,
        connector: $connector,
        filter: $filter,
        name: $name,
        summarySpecification: $summarySpecification,
        publishSpecification: $publishSpecification,
        workflow: $workflow
      ) {
        id
        name
        creationDate
        owner {
          id
        }
        state
        uri
        text
        type
        fileType
        mimeType
      }
    }

Variables:

    {
      "publishPrompt": "I'm an apartment manager, and need to write an inspection report about this apartment. Respond in separate sections, each highlighting the main bullet points and summarized in a paragraph or two. Follow these steps. Step 1: Summarize what you can tell about each room. Step 2: Write an overall summary of the apartment. Identify key features of each room, such as the kitchen and bathroom. Be detailed and call out things like the floor type and appliances. Step 3: Highlight any damage which may need repair.",
      "connector": {
        "type": "TEXT",
        "format": "MARKDOWN"
      },
      "filter": {
        "feeds": [
          {
            "id": "363bcfec-db1d-42dc-afb5-29d84018b67c"
          }
        ]
      },
      "publishSpecification": {
        "id": "1750c1d4-cd16-41cd-ab2d-8dddf25472e1"
      }
    }
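To show how these variables might travel over HTTP, here is a hedged sketch that prepares (but does not send) a POST request with Python's standard library. Several things here are assumptions rather than details from this tutorial: the endpoint URL is a placeholder, bearer-token authentication is assumed, the helper name `build_publish_request` is hypothetical, and the mutation is a trimmed version of the one above that declares only the variables used and selects fewer fields.

```python
import json
import urllib.request

# Trimmed version of the tutorial's PublishContents mutation: only the
# variables used in this example and a shorter selection set.
PUBLISH_CONTENTS = """
mutation PublishContents($publishPrompt: String!, $connector: ContentPublishingConnectorInput!, $filter: ContentFilter, $publishSpecification: EntityReferenceInput) {
  publishContents(publishPrompt: $publishPrompt, connector: $connector, filter: $filter, publishSpecification: $publishSpecification) {
    id
    name
    state
    text
  }
}
"""


def build_publish_request(endpoint_url: str, token: str, prompt: str,
                          feed_id: str, specification_id: str) -> urllib.request.Request:
    """Prepare a POST request for publishContents (hypothetical helper)."""
    variables = {
        "publishPrompt": prompt,
        "connector": {"type": "TEXT", "format": "MARKDOWN"},
        # Restrict publishing to contents ingested by one feed.
        "filter": {"feeds": [{"id": feed_id}]},
        "publishSpecification": {"id": specification_id},
    }
    body = json.dumps({"query": PUBLISH_CONTENTS, "variables": variables})
    return urllib.request.Request(
        endpoint_url,
        data=body.encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumption: the API accepts a bearer token.
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


request = build_publish_request(
    "https://example.invalid/graphql",  # placeholder, not a real endpoint
    "YOUR_TOKEN",
    "Highlight any damage which may need repair.",
    "363bcfec-db1d-42dc-afb5-29d84018b67c",
    "1750c1d4-cd16-41cd-ab2d-8dddf25472e1",
)
print(request.get_method(), request.full_url)
```

Passing the prepared request to `urllib.request.urlopen` would send it; that step is left out here because it needs a real endpoint and credentials.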

Response:

    {
      "type": "TEXT",
      "text": "## Kitchen Inspection\n\n- **Cabinetry**: The kitchen features modern cabinetry with a minimalist design, including sleek handles and a neutral color palette.\n- **Countertops and Backsplash**: Countertops are adorned with tiles and finished with a bullnose edge, complemented by a tiled backsplash that matches the cabinetry.\n- **Sink Area**: The sink area is characterized by a minimalist aesthetic with a modern faucet.\n- **Flooring**: The kitchen boasts a checkered pattern black and white tile floor, contributing to a classic and somewhat retro aesthetic.\n- **Appliances**: Notable appliances include a white electric stove with four burners, positioned without any clutter around it.\n- **Lighting**: The kitchen benefits from soft and even lighting, enhancing the modern design.\n\nThe kitchen presents a modern and minimalist design with clean lines and a simple color palette. The cabinetry is contemporary, with functional handles and a neutral color that blends well with the overall design. The countertops and backsplash are tiled, providing a cohesive look. The sink area is notably uncluttered, adhering to the minimalist theme. The black and white checkered floor adds a classic touch to the space. The white electric stove is the main appliance, and its uncluttered placement suggests a focus on functionality. The lighting in the kitchen is soft and even, contributing to the room's welcoming atmosphere.\n\n## Bathroom Inspection\n\n- **Fixtures**: The bathroom includes a sink, toilet, and traditional alcove style bathtub with a tiled surround.\n- **Flooring**: The floor is covered with small hexagonal tiles, maintaining a consistent white color scheme.\n- **Walls**: Walls are painted in a light color with a green and red border adding a touch of color.\n- **Storage**: A mirrored medicine cabinet is present above the sink for storage and grooming activities.\n- **Lighting**: Soft and diffused lighting likely comes from an overhead source.\n- **Mood**: The bathroom conveys tranquility and cleanliness with its monochromatic color scheme and minimalist aesthetic.\n\nThe bathroom maintains a clean and bright atmosphere with its white hexagonal-tiled flooring and light-colored walls accented by a green and red border. The fixtures include a wall-mounted sink without a vanity, a close-proximity toilet, and a traditional alcove bathtub, suggesting space efficiency. A mirrored medicine cabinet provides storage and functionality. The soft, diffused lighting from an overhead source enhances the serene mood of the bathroom. The minimalist design and monochromatic color scheme contribute to the room's tranquil and uncluttered appeal.\n\n## Overall Apartment Summary\n\nThe apartment exhibits a modern and minimalist design aesthetic throughout, with a focus on functionality and clean lines. Key features include:\n\n- **Kitchen**: Modern cabinetry, tiled countertops with bullnose edge finishing, minimalist sink area, checkered black and white tile flooring, white electric stove, and soft, even lighting.\n- **Bathroom**: White hexagonal tile flooring, light-colored walls with a decorative border, essential fixtures including a sink, toilet, and alcove bathtub, mirrored medicine cabinet, and soft overhead lighting.\n\nThe apartment's design is consistent, with neutral colors and simple materials that create a contemporary and inviting space. The flooring choices, from the kitchen's checkered tiles to the bathroom's hexagonal tiles, add character while maintaining a clean look. The appliances and fixtures are modern and functional, supporting the apartment's overall minimalist theme.\n\n## Damage Inspection\n\n- **Kitchen**: No visible damage is reported in the kitchen area.\n- **Bathroom**: No significant damage is noted in the bathroom. However, the presence of a green and red border on the walls may require updating to match the rest of the minimalist aesthetic.\n\nThe inspection reveals that the apartment is well-maintained with no significant damage. The kitchen and bathroom are in good condition, with modern appliances and fixtures that show no signs of wear or malfunction. The only potential issue is the dated color border in the bathroom, which may not align with the desired minimalist design and could be considered for renovation to achieve a more cohesive look throughout the apartment.",
      "mimeType": "text/markdown",
      "fileType": "DOCUMENT",
      "id": "deef973b-0a39-4037-aad0-b9b5d2708362",
      "name": "Content",
      "state": "EXTRACTED",
      "creationDate": "2024-01-24T03:11:17Z",
      "owner": {
        "id": "5a9d0a48-e8f3-47e6-b006-3377472bac47"
      }
    }
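Since the published report comes back as one Markdown string in the text field, a small post-processing step can make it easier to work with, for example to pull out only the damage section. The sketch below splits the Markdown on its "## " headings. The `split_sections` helper is hypothetical, and the sample response is heavily abbreviated from the one above for illustration.

```python
import json

# Abbreviated stand-in for the response above (text shortened for illustration).
SAMPLE_RESPONSE = json.dumps({
    "type": "TEXT",
    "text": (
        "## Kitchen Inspection\n\n"
        "- **Flooring**: The kitchen boasts a checkered pattern black and "
        "white tile floor.\n\n"
        "## Damage Inspection\n\n"
        "- **Kitchen**: No visible damage is reported in the kitchen area."
    ),
    "mimeType": "text/markdown",
})


def split_sections(markdown: str) -> dict:
    """Map each '## ' heading to the body text that follows it."""
    sections = {}
    title = None
    lines = []
    for line in markdown.splitlines():
        if line.startswith("## "):
            # Close the previous section before starting a new one.
            if title is not None:
                sections[title] = "\n".join(lines).strip()
            title = line[3:].strip()
            lines = []
        elif title is not None:
            lines.append(line)
    if title is not None:
        sections[title] = "\n".join(lines).strip()
    return sections


report = json.loads(SAMPLE_RESPONSE)
sections = split_sections(report["text"])
print(sections["Damage Inspection"])
```

Text that appears before the first heading is ignored by this sketch, which is fine for the report format shown here but may need adjusting for other prompts.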

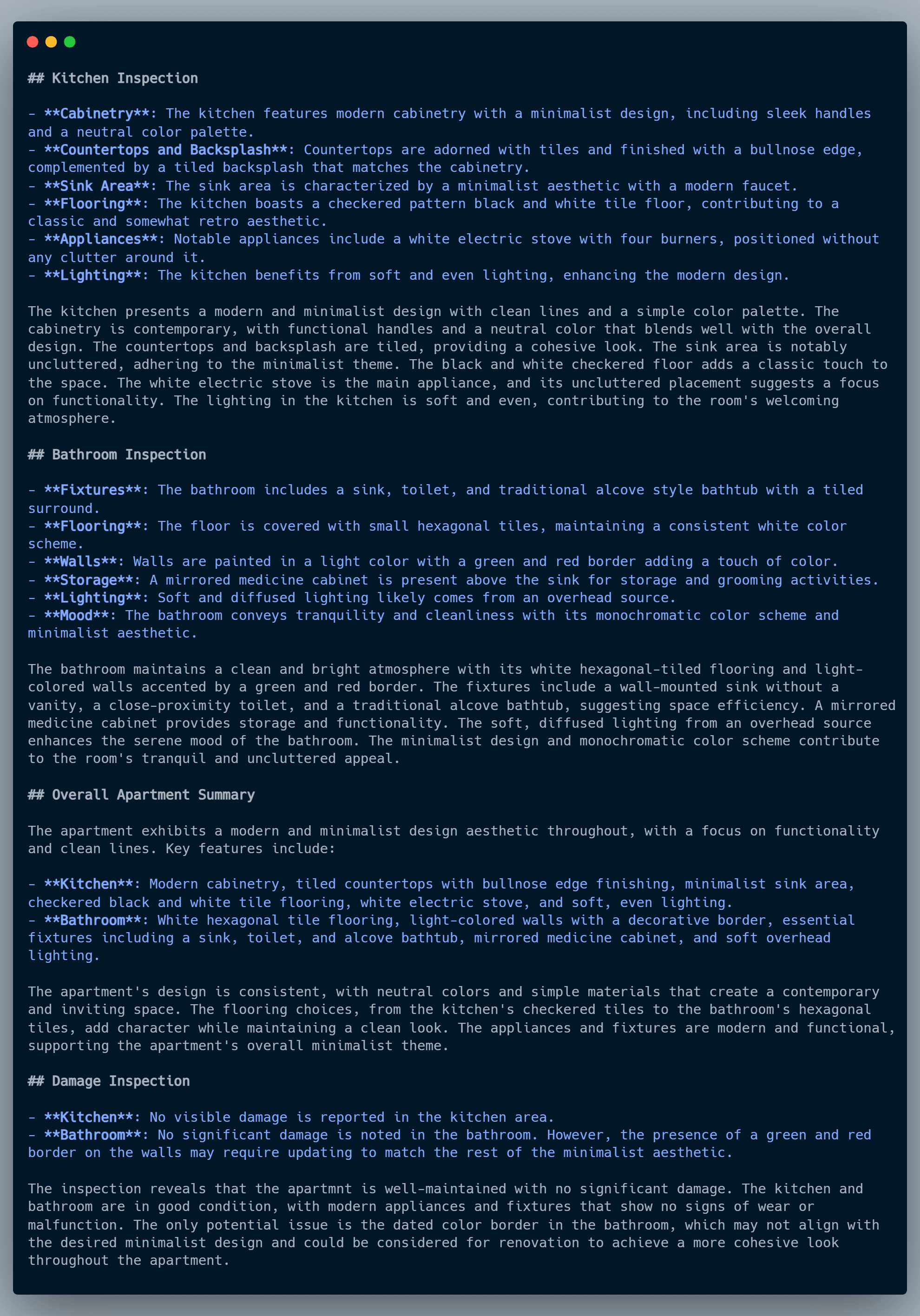

Apartment Inspection Report

You can see the level of detail that the GPT-4 Vision model enables: it identified the white electric stove and the hexagonal tiles in the bathroom, and even called out how the colored border in the bathroom doesn't match the overall minimalist style of the apartment.

You can imagine an apartment manager using this publishing method to generate copy for a real estate website, or to generate a move-out report that identifies potential damage.

Combining multimodal models like GPT-4 Vision with the content publishing capabilities of Graphlit opens up many more possibilities like these.

Summary

Please email any questions on this tutorial or the Graphlit Platform to questions@graphlit.com.

For more information, you can read our Graphlit Documentation, visit our marketing site, or join our Discord community.